The Case for Independent Oversight: Navigating the Ethical Frontier of Artificial Intelligence

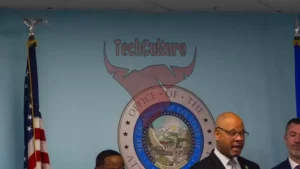

Artificial intelligence is no longer the stuff of speculative fiction or academic theory; it is a transformative force reshaping economies, societies, and the fabric of daily life. Yet as AI systems grow in sophistication and reach, regulation lags behind, creating a precarious gap between technological possibility and societal preparedness. Suzanne Nossel’s call for independent oversight of AI, echoing the model pioneered by Meta’s Oversight Board, arrives at a critical juncture. Her argument is not merely timely but necessary, as the world contends with the profound ethical, economic, and geopolitical stakes of unchecked AI development.

The Regulatory Vacuum: Lessons from Past Technological Revolutions

History offers sobering lessons about the costs of unregulated technological revolutions. The nuclear age brought with it not only unprecedented power but also existential risk, prompting the creation of stringent international controls such as the Nuclear Non-Proliferation Treaty. The internet, for all its promise, unleashed waves of privacy violations, misinformation, and market disruptions, issues still being wrestled with decades later. Artificial intelligence, by contrast, is advancing largely without the guardrails that eventually shaped those earlier epochs of innovation.

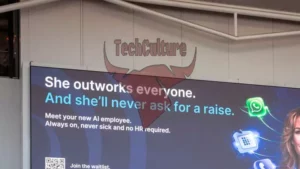

Today, AI’s risks are less visible but no less real. From algorithmic bias to the potential for mass surveillance and autonomous weaponry, the spectrum of harm is as broad as it is complex. The statistic that 77% of Americans see AI as a potential threat is a stark indicator of public anxiety—a sentiment that cannot be dismissed as mere technophobia. Rather, it is a rational response to the absence of robust, transparent mechanisms to ensure that AI development serves the public good, not just corporate profit.

The Oversight Board Model: A Blueprint for Responsible Innovation

Nossel’s analogy to Meta’s Oversight Board is instructive. While imperfect, Meta’s experiment in external governance has shown that independent, diverse panels can inject transparency and accountability into technology companies’ decision-making. Translating this model to the broader AI industry, including giants like OpenAI and Google, could represent a watershed moment for tech governance.

Independent oversight boards, if empowered and resourced appropriately, could embed ethical deliberation directly into the development pipeline. This would mark a shift from reactive crisis management to proactive risk mitigation, ensuring that considerations of privacy, fairness, and human rights are not afterthoughts but foundational principles. For industry leaders, this is not a threat to innovation but a shield against the kind of public backlash and regulatory whiplash that can derail even the most promising technologies.

Balancing Market Forces with Societal Values

The prospect of independent oversight inevitably raises questions about its impact on market dynamics. Critics warn of regulatory drag: the fear that oversight could slow the pace of AI innovation and cede competitive advantage to less scrupulous actors. Yet the opposite may well be true. Transparent governance frameworks can build public trust, catalyze investment, and create a level playing field where ethical leadership becomes a competitive differentiator.

Moreover, opening oversight to public consultation, and backing it with sustainable funding, helps ensure that governance is not a mere box-ticking exercise but a living system responsive to societal needs. This approach harmonizes the interests of shareholders, users, and citizens, making it possible to pursue technological progress without sacrificing the social contract.

Charting the Future: From Risk Mitigation to Responsible Stewardship

The momentum behind AI is unstoppable, but its trajectory is not predetermined. By establishing independent, well-funded oversight bodies, society can shape the direction of artificial intelligence, aligning it with collective values rather than narrow interests. This is not simply a matter of risk avoidance—it is an act of stewardship, a commitment to ensuring that the promises of AI do not come at the expense of our rights, our security, or our shared humanity.

As the digital age enters a new chapter, the call for independent oversight is both a pragmatic safeguard and a moral imperative. The future of AI will be defined not just by what we can build, but by what we choose to govern.