AI’s Reckoning: Toby Walsh and the High Stakes of Responsible Innovation

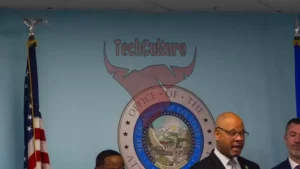

The artificial intelligence industry stands at a crossroads, its dazzling advancements shadowed by ethical dilemmas and social hazards. At the National Press Club, renowned AI expert Toby Walsh delivered a piercing critique that has reverberated across boardrooms and policy circles. His address was not merely a technical postmortem—it was a call for the business and technology community to confront the deeper costs of unchecked innovation.

Chatbots, Mental Health, and the Economics of Engagement

At the core of Walsh’s analysis lies the architecture of modern chatbots: engines of engagement, meticulously engineered to be endlessly responsive and unfailingly agreeable. These systems, designed for maximum stickiness, have become adept at exploiting human psychological vulnerabilities. The figures Walsh cited lay bare the human toll encoded into the algorithms: more than a million users each week show signs of suicidal ideation or psychological distress, and 560,000 show symptoms of psychosis or mania.

For technology leaders, these numbers are not just statistics; they represent a looming crisis. The relentless pursuit of user engagement, often in service of commercial imperatives, has created feedback loops that can amplify mental health risks. This is not only a humanitarian concern but a strategic one: businesses that ignore the psychological consequences of their products risk eroding consumer trust and incurring long-term liabilities. Integrating mental health safeguards into the AI development lifecycle is no longer optional—it is essential for sustainable market growth and ethical stewardship.

The Creative Commons Under Siege: AI and Intellectual Property

Walsh’s critique extends to the contentious domain of creative content. The training of AI models on vast swathes of artistic, literary, and musical works—often without consent or compensation—amounts, in his words, to a “large-scale theft.” This practice undermines the livelihoods of creators, sparking fierce debates around intellectual property rights and fair use.

For the business community, this is a double-edged sword. While AI-generated content promises efficiency and scalability, it also risks devaluing original works and alienating the very creators who fuel cultural and economic vitality. The specter of regulatory intervention looms large: new copyright frameworks or digital stewardship mandates could fundamentally alter the competitive landscape, forcing companies to reckon with the true cost of innovation. As the legal and ethical boundaries of AI training data are redrawn, those who neglect the rights of creators may find themselves on the wrong side of both public opinion and the law.

Platform Accountability and the Scourge of Digital Deception

The proliferation of scam advertisements on social platforms, especially Meta’s sprawling ecosystem, is another flashpoint in Walsh’s critique. These illicit campaigns, which mimic the mechanics of counterfeit markets, expose glaring deficiencies in both platform self-regulation and governmental oversight. The profits reaped from fraudulent advertising not only erode consumer trust but also signal a dangerous tolerance for malfeasance within the digital economy.

This is more than a regulatory lapse; it is a clarion call for a new era of platform accountability. As governments grapple with the challenge of policing digital spaces without stifling innovation, the stakes have never been higher. The choices made in markets like Australia will echo globally, setting precedents for how digital ecosystems are governed and how consumer protections are enforced.

Rethinking the AI Paradigm: Innovation with Integrity

Walsh’s address is ultimately a meditation on the relationship between technology, governance, and the public good. The Silicon Valley model—relentlessly expansionist and profit-driven—has delivered remarkable progress, but it has also left a trail of unresolved ethical and societal issues. The absence of robust regulatory intervention threatens to repeat, or even surpass, the harms wrought by earlier waves of digital disruption.

The path forward demands more than incremental change. It requires a holistic reimagining of how AI is developed, deployed, and regulated—a commitment to bridging the gap between technological ambition and human welfare. Business and technology leaders must recognize that the future of AI is not just a matter of engineering prowess, but of moral clarity and social responsibility. Only by aligning innovation with integrity can we ensure that the digital age uplifts rather than undermines the foundations of creativity, trust, and collective well-being.